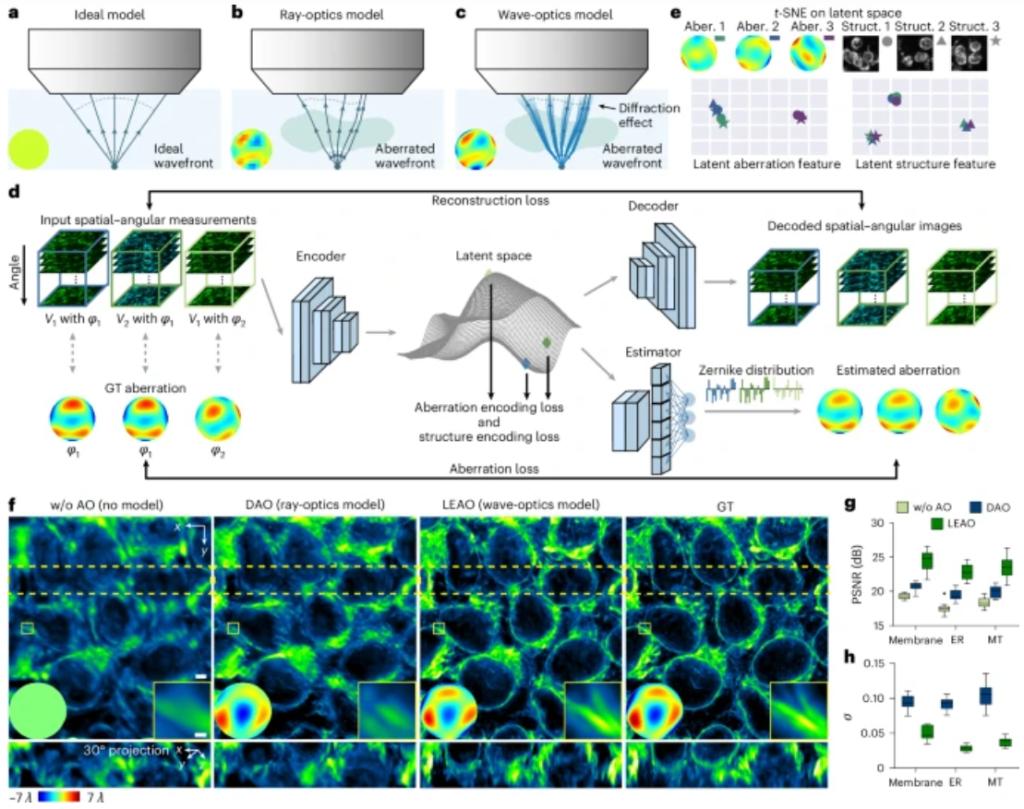

Nature Biotechnology 2026 | High-fidelity intravital imaging of biological dynamics with latent-s...

LEAO extracts wave-optics priors from spatial-angular data and disentangles them in latent space to improve aberration estimation accuracy and robustness across various challenging conditions, enabling high-fidelity intravital imaging.

Science 2026 | Deeper detection limits in astronomical imaging using self-supervised spatiotempor...

Astronomical Self-supervised Transformer-based Denoising (ASTERIS) uses spatiotemporal information across multiple exposures to deepen detection limits by 1.0 magnitude while preserving accuracy. Validated with deep JWST imaging data, it reveals faint structures and triples the number of redshift ≳ 9 galaxy candidates compared with prior methods.

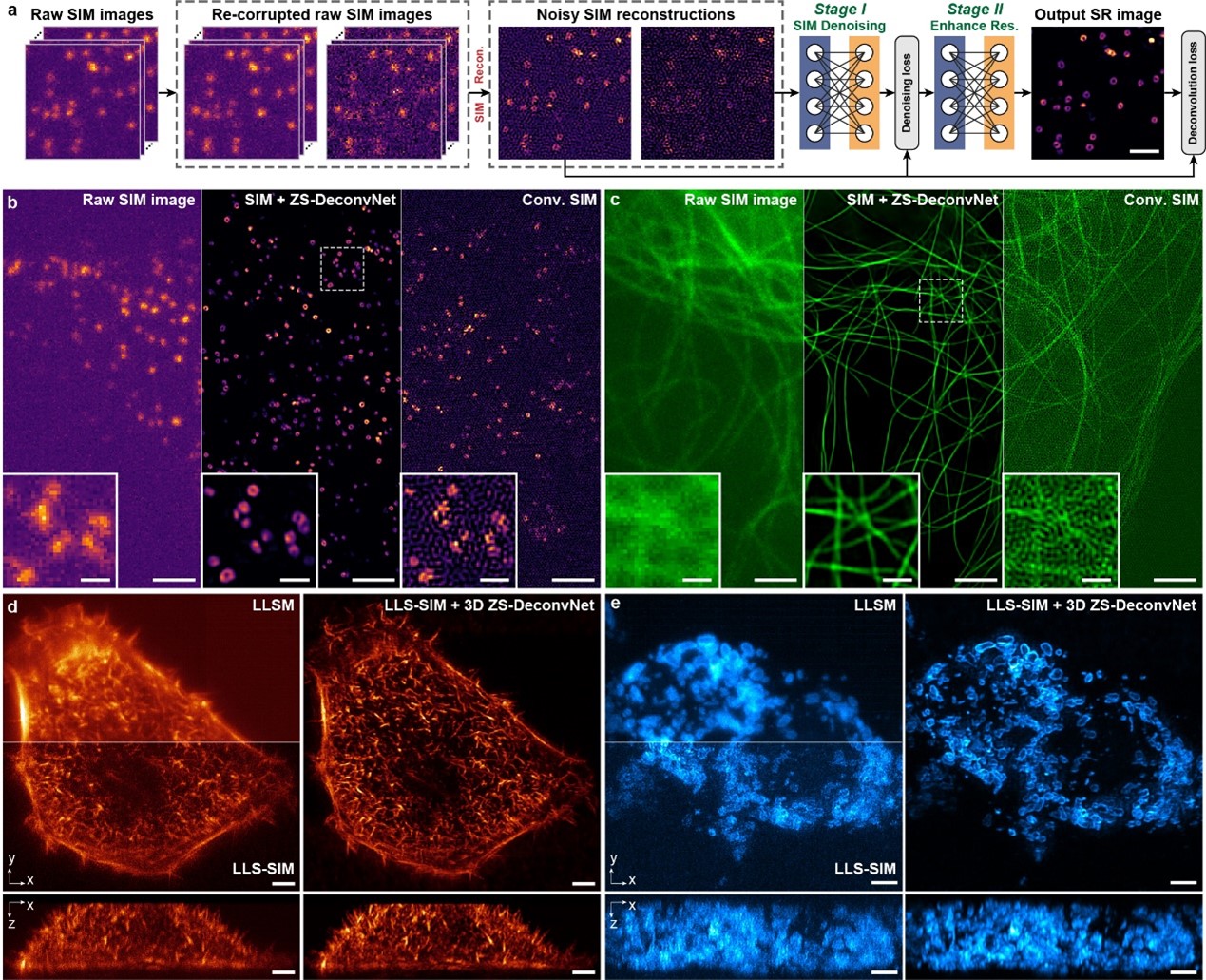

Nature Biotechnology 2025 | A neural network for long-term super-resolution imaging of live cells...

The Bayesian deformable phase-space alignment time-lapse image super-resolution (Bayesian DPA-TISR) neural network adaptively enhances cross-frame alignment in the phase domain and outperforms existing state-of-the-art SISR and TISR models. It enables multi-color live-cell super-resolution imaging of various biological specimens for more than 10,000 timepoints with high fidelity, temporal consistency, and accurate confidence quantification.

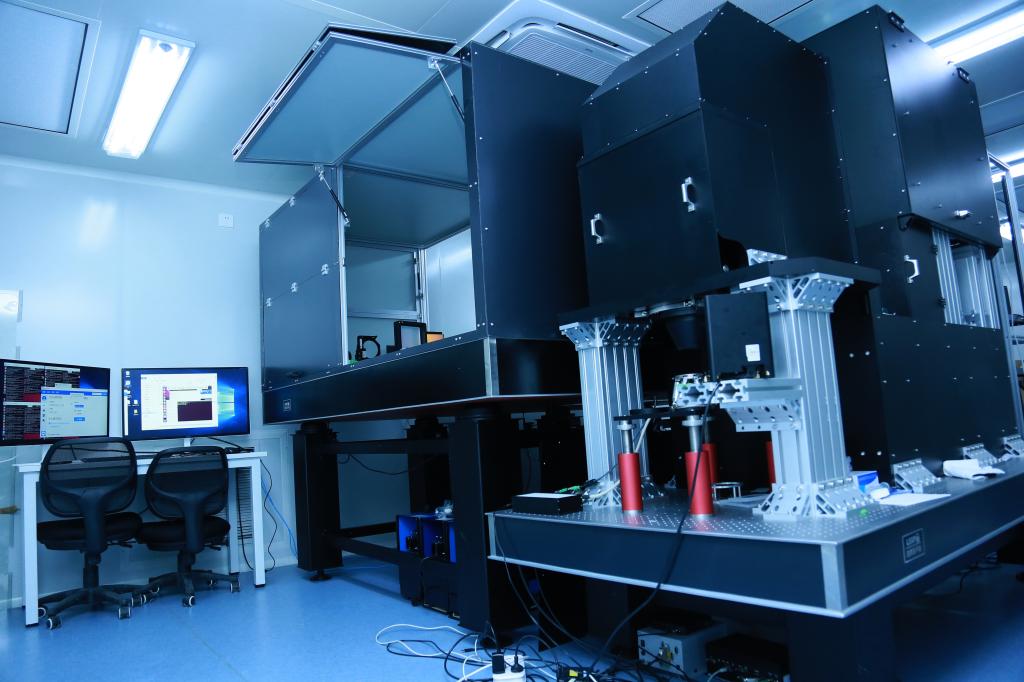

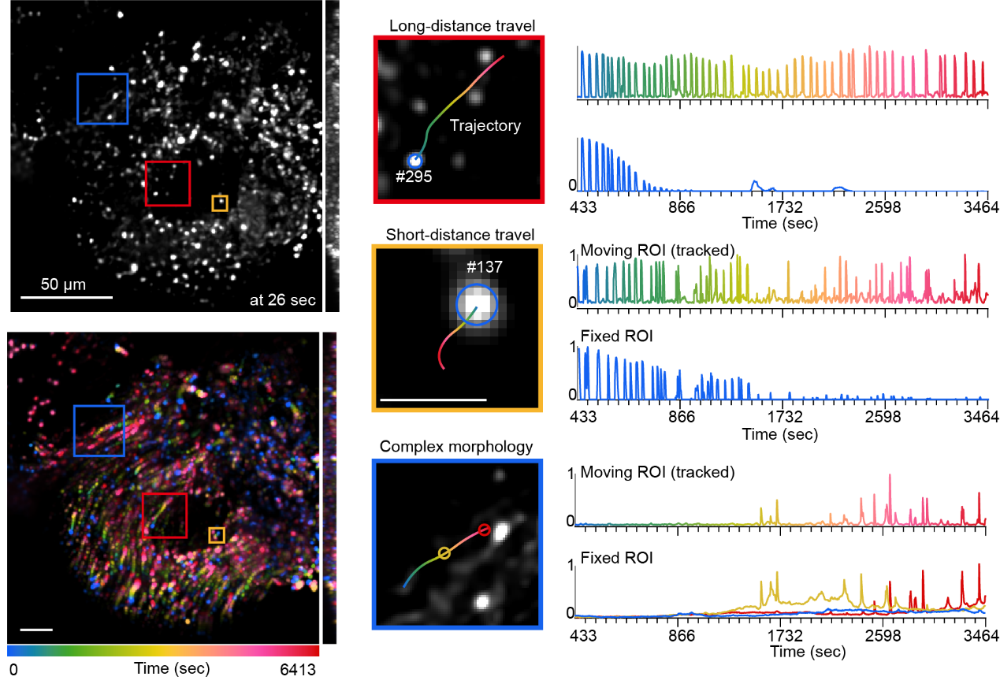

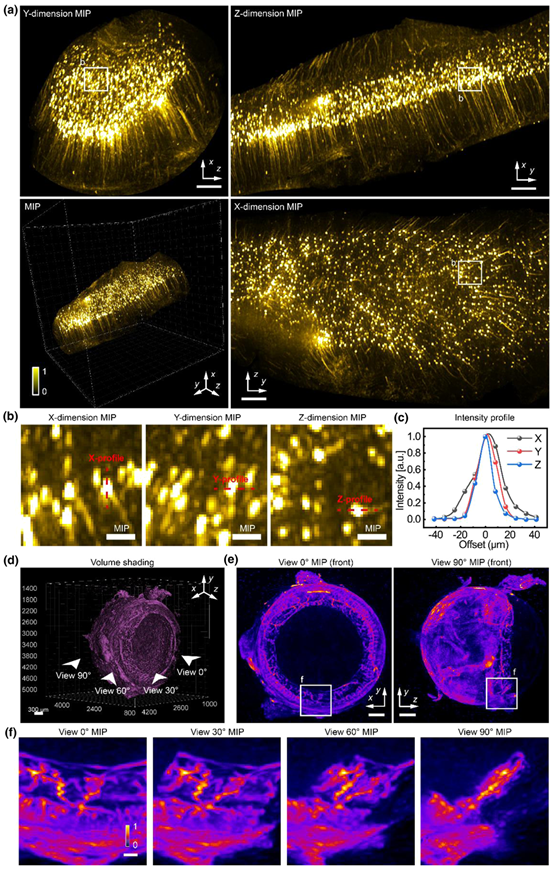

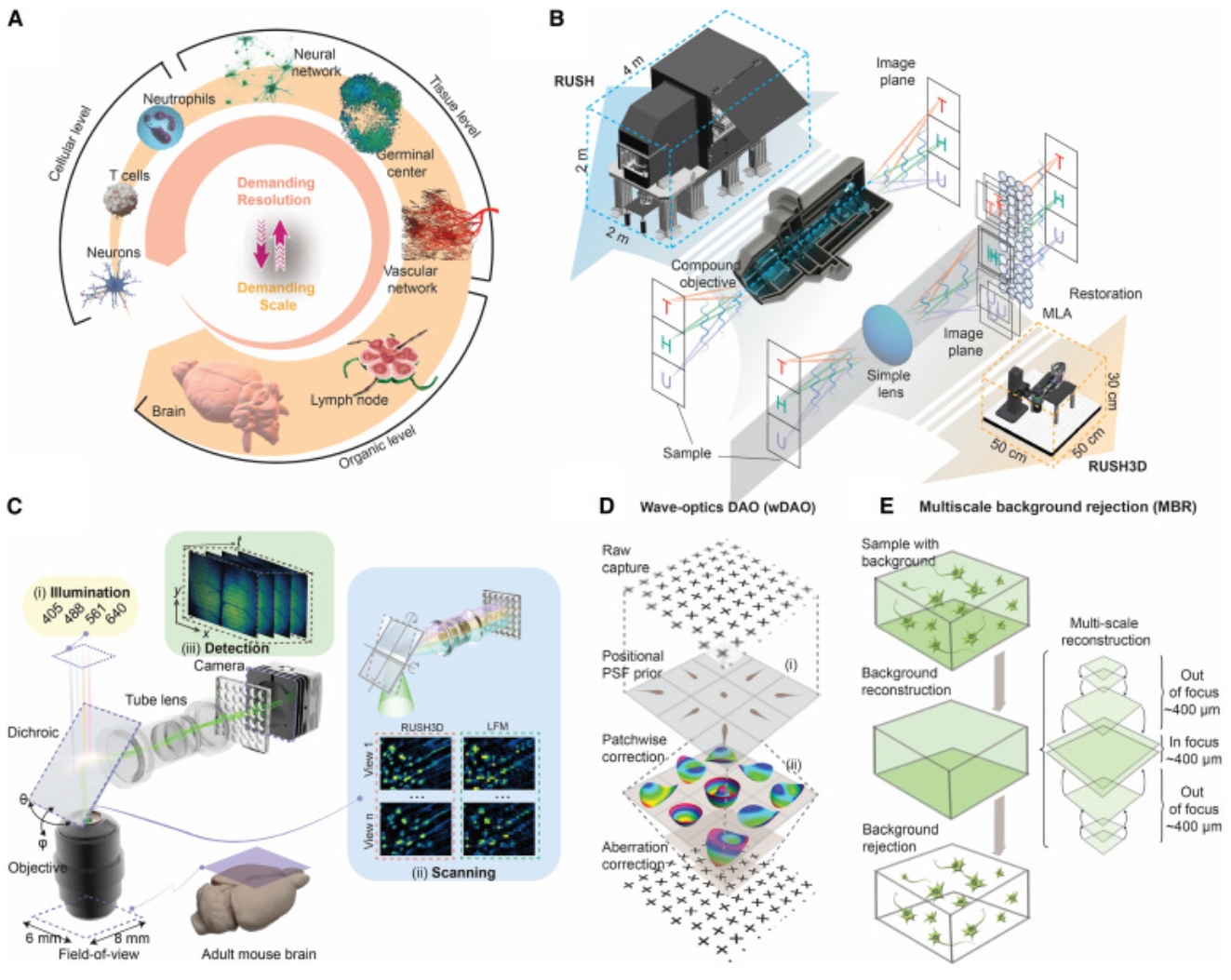

Cell 2024 | Long-term mesoscale imaging of 3D intercellular dynamics across a mammalian organ

RUSH3D integrates multiple computational imaging methods within a scanning light-field framework, enabling long-term, high-speed, centimeter-wide mesoscale 3D imaging at single-cell resolution in a compact system, with broad practical applications in understanding large-scale intercellular dynamics at the mammalian organ level.

Nature Photonics 2024 | Direct observation of atmospheric turbulence with a video-rate wide-field...

The Wide-field Wavefront Sensor (WISE) achieves the first video-rate detection and prediction of atmospheric turbulence distribution over a field of view exceeding 1100 arcseconds, nearly a thousand times larger than that of traditional adaptive optics. This breakthrough addresses the challenges of observing wide-field, anisoplanatic atmospheric turbulence, while offering high resolution, strong robustness, and plug-and-play capabilities.

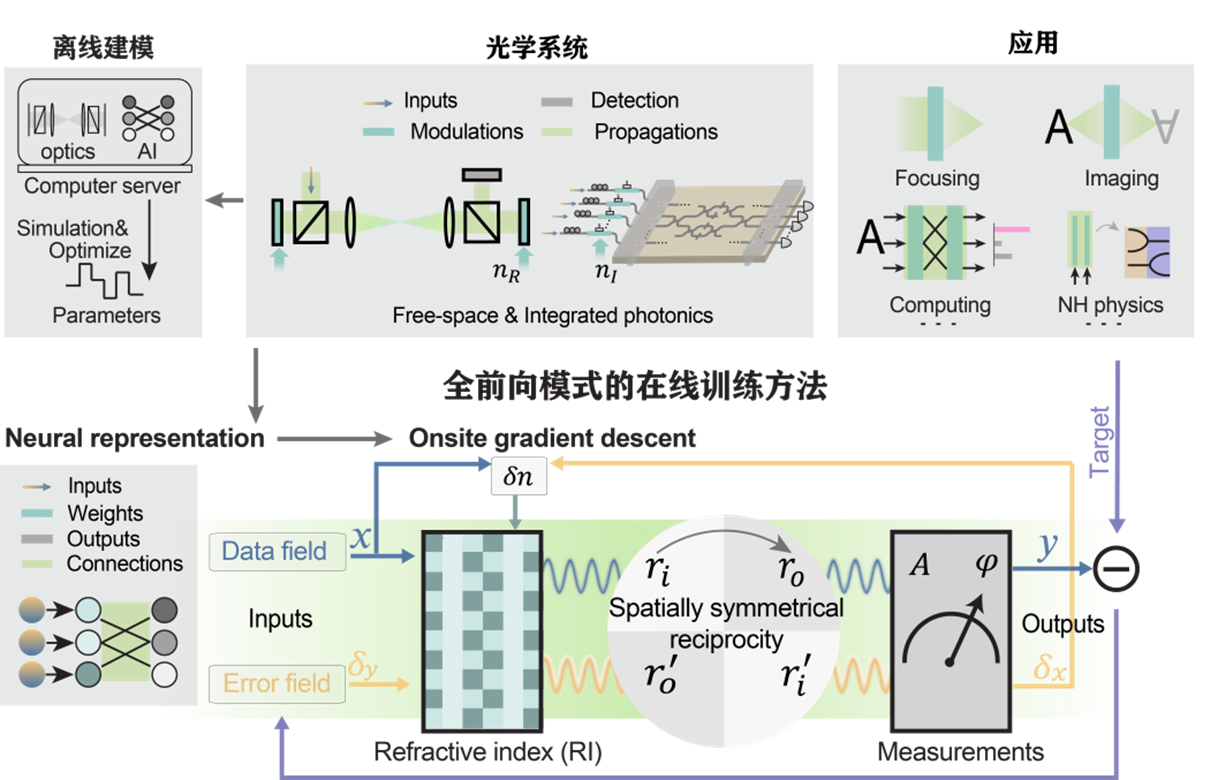

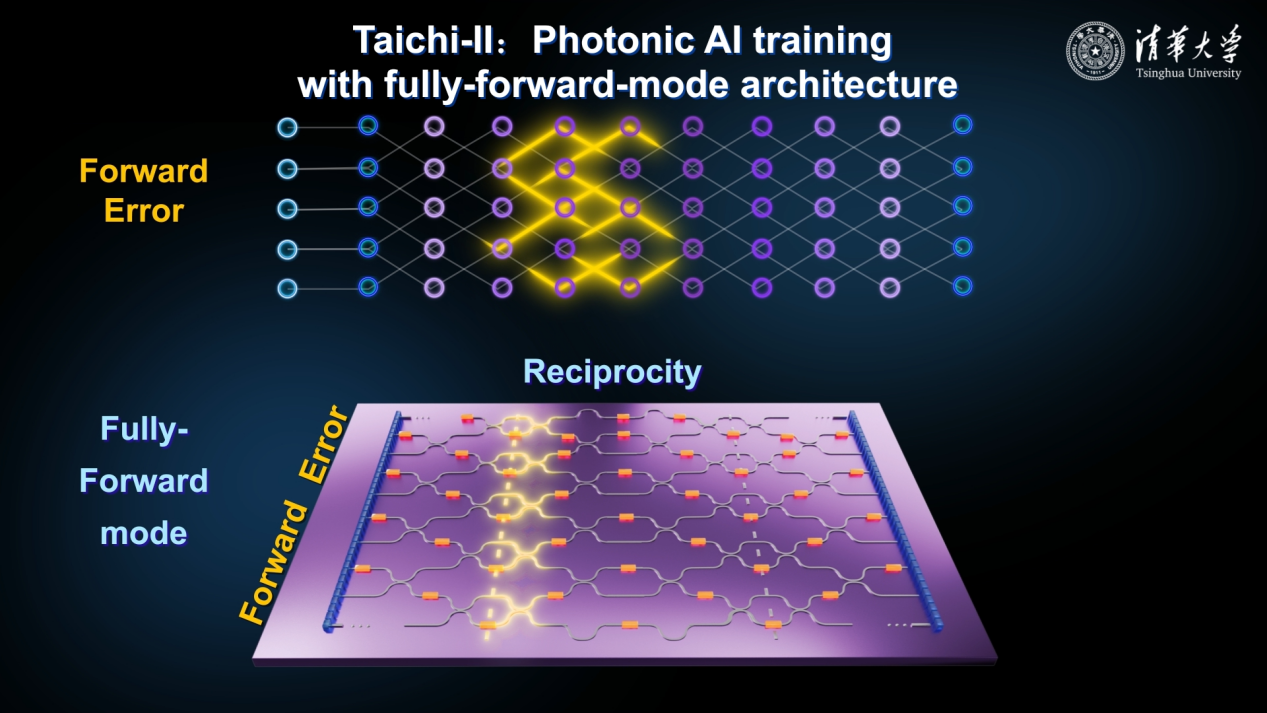

Nature 2024 | Fully forward mode training for optical neural networks

Fully forward mode learning maps optical systems to parameterized onsite neural networks and enables self-learning guided by application targets. By leveraging spatial symmetry and Lorentz reciprocity, it eliminates the need for backward propagation in gradient-descent training. Consequently, the optical parameters can be self-designed directly on the original physical system.

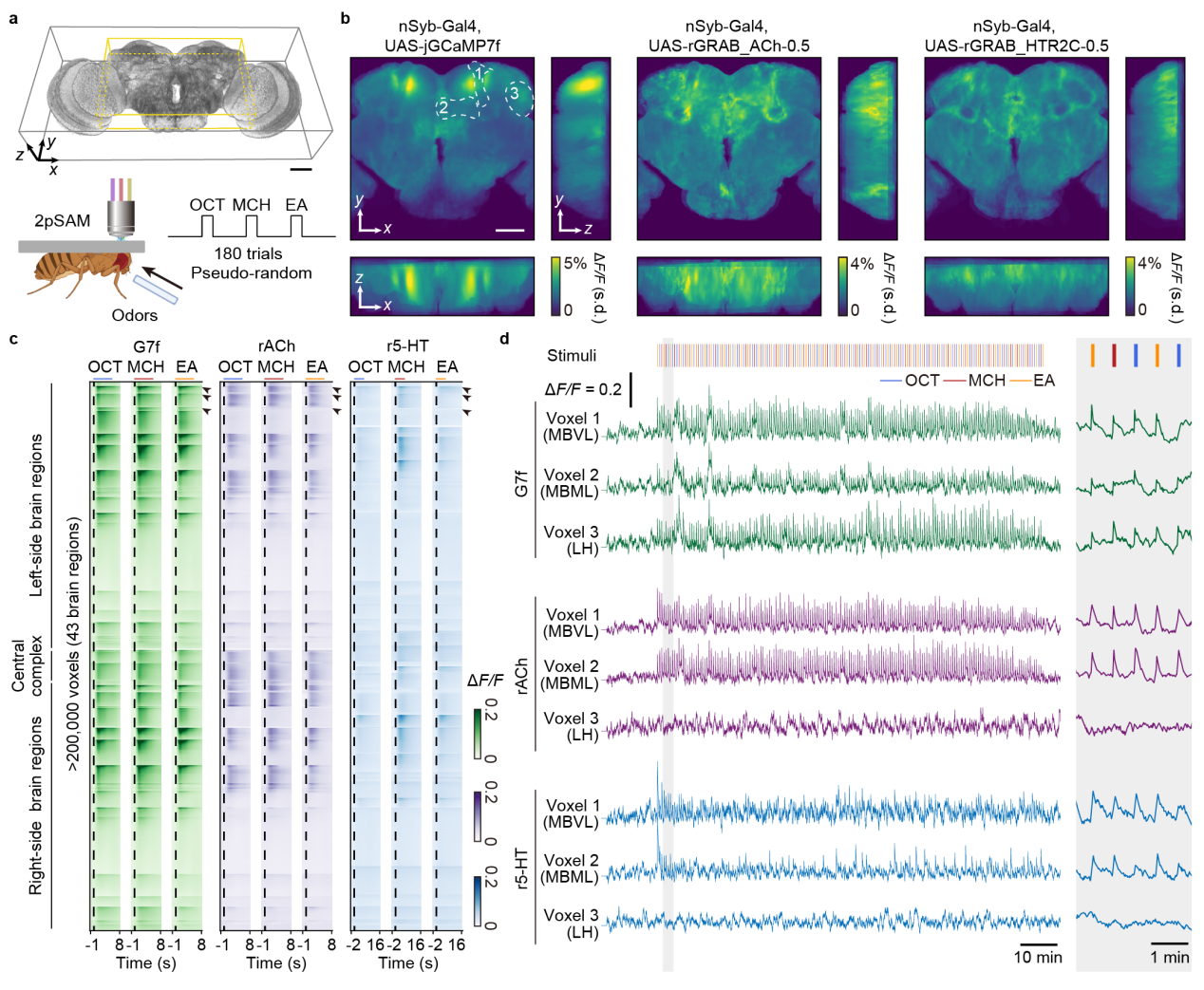

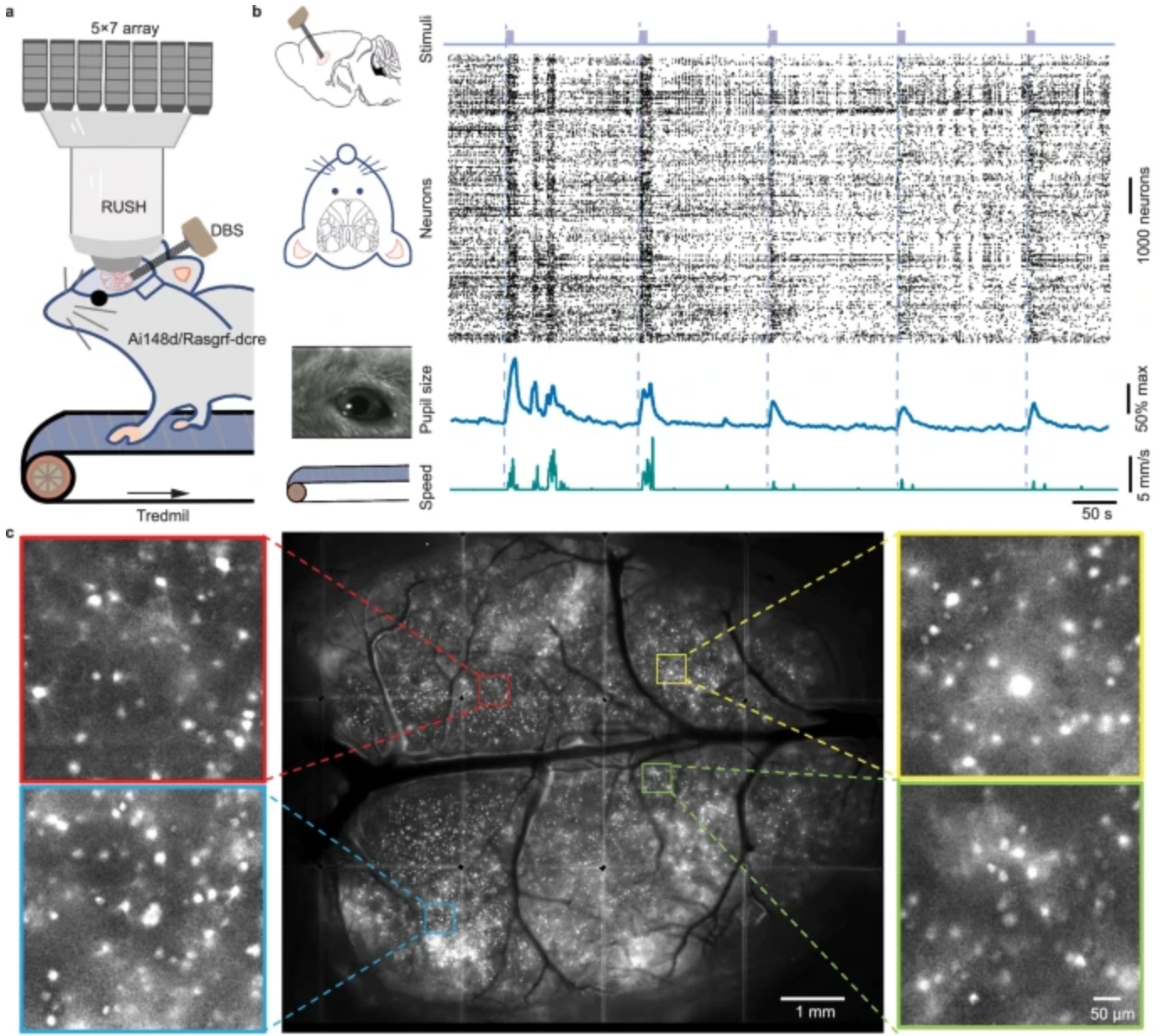

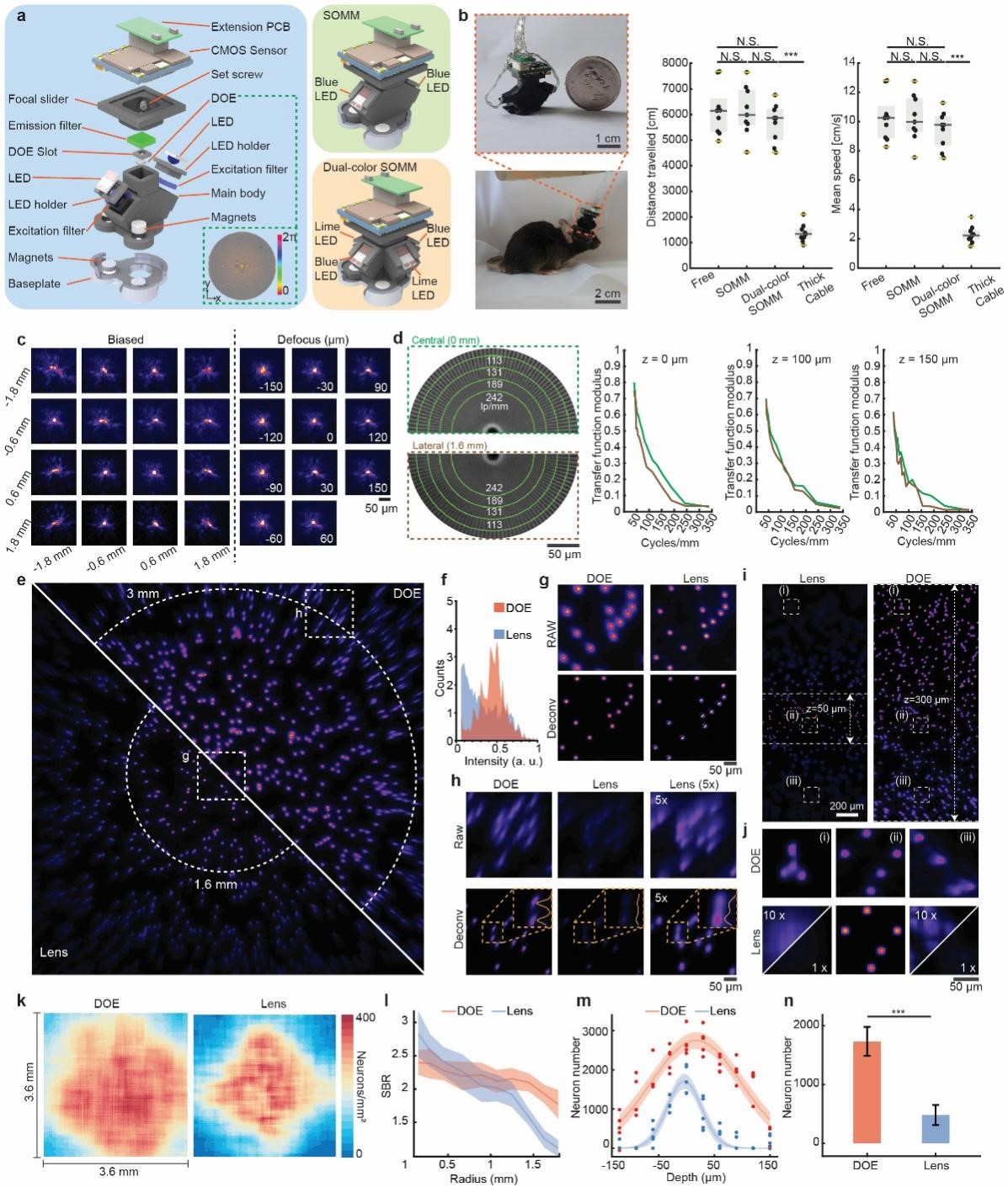

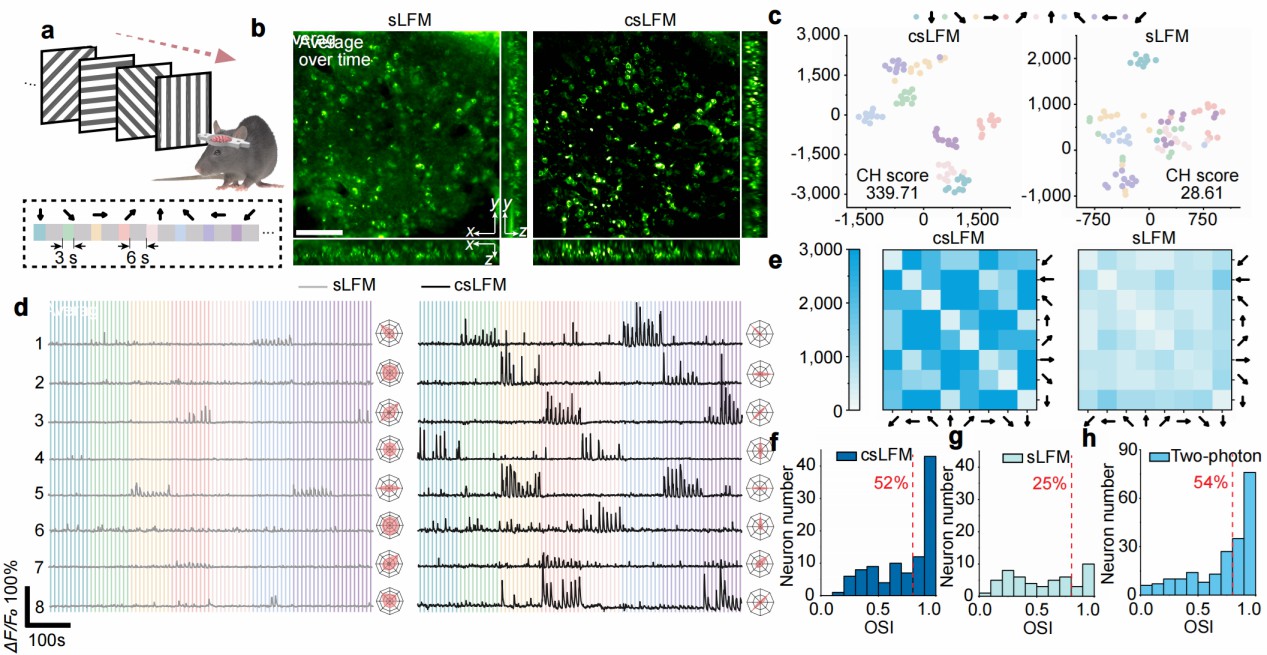

Nature Biotechnology 2024 | Long-term intravital subcellular imaging with confocal scanning light...

Harnessing the concepts of line-confocal and scanning light-field imaging, csLFM offers background-suppressed 3D imaging of subcellular dynamics at millisecond scale over long imaging durations in vivo, with enhanced contrast to clearly distinguish fine structures in mammals.

Science 2024 | Large-scale diffractive-interference Taichi photonic chiplets solve advanced AGI ta...

Rapid advances in artificial general intelligence (AGI) come with increased performance and energy efficiency requirements for next-generation computing. Photonic computing has the potential to achieve these goals, but despite attracting attention, current photonic integrated circuits have limited scale and computing capabilities, barely supporting modern AGI tasks. Xu et al. explored a distributed diffractive-interference hybrid photonic computing architecture to effectively increase the scale of the ONN to the million-neuron level. They experimentally realized an on-chip 13.96-million-neuron ONN for complex, thousand-category-level classification and AI-generated content tasks. The present work is a promising step toward real-world photonic computing, supporting various applications in AI.